What is a GPU and Why Do I Care? A Businessperson's Guide

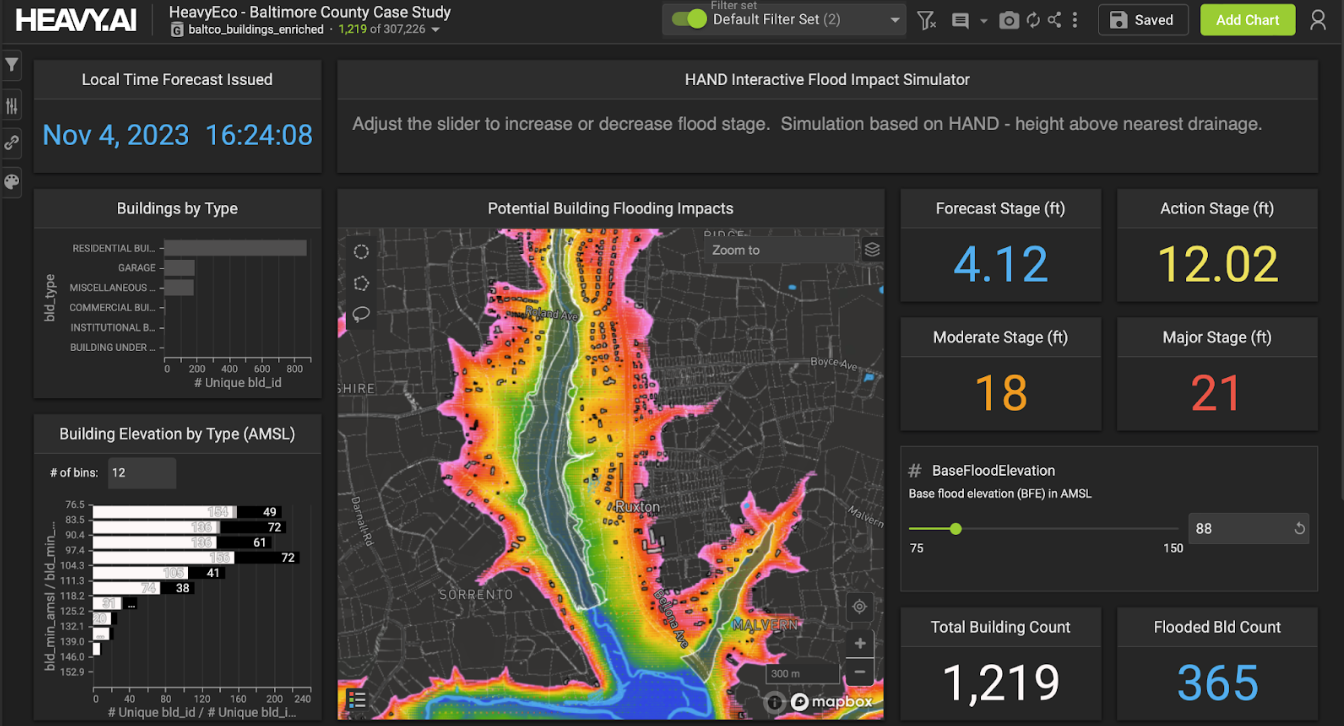

While 2016 was the year of the GPU for a number of reasons, the truth of the matter is that outside of some core disciplines (deep learning, virtual reality, autonomous vehicles) the reasons why you would use GPUs for general purpose computing applications remain somewhat unclear.

As a company whose products are tuned for this exceptional compute platform, we have a tendency to assume familiarity, often incorrectly.

Our New Year’s resolution is to explain, in language designed for business leaders, what a GPU is and why you should care.

Let’s get started by baselining on existing technology - the CPU. Most of us are familiar with a CPU. Intel is the primary manufacturer of these chips, and its gargantuan advertising budget ensures that everyone knows they power everything from laptops to supercomputers.

CPUs are designed to deliver low-latency processing for a variety of applications. CPUs are great for versatile tasks like spreadsheets, word processors and web applications. As a result, CPUs have traditionally been the go-to compute resource for most enterprises.

When your IT manager says they are ordering more computers or servers or adding capacity in the cloud, they have historically been talking about CPUs.

While highly versatile, CPUs are constrained in the number of “cores” that can be placed on a chip. Most consumer chips max out around 8, although enterprise-grade “Xeons” can have up to 22.

It has taken decades to progress from a single core to where we are today. Scaling a chip as complex as a CPU requires shrinking transistor size, heat generation and power consumption all at once. The success that has been achieved so far can be attributed in large part to the Herculean efforts of Intel and AMD’s talented engineers.

The net effect is that for optimized code, CPUs are seeing an approximately 20% improvement per year (measured in raw FLOPS).

This, it turns out, is important.

With limited processing gains on the horizon, CPUs are falling further and further behind the growth in data, which is increasing at double that rate (40%) and accelerating.

With data growth outstripping CPU processing growth, you have to resort to tricks like downsampling and indexing, or engage in expensive scale-out strategies, to avoid waiting minutes or hours for a response.
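To make the compounding concrete, here is a minimal sketch that uses only the two growth rates quoted above (20% per year for CPUs, 40% per year for data). It assumes both rates stay constant, which is a simplification, and the function name is purely illustrative.

```python
# Growth rates quoted in the post: CPU throughput ~20%/year, data ~40%/year.
# Assumption: both rates hold steady from year to year.
CPU_GROWTH = 0.20
DATA_GROWTH = 0.40


def gap_after(years: int) -> float:
    """How much more data there is per unit of CPU compute after `years`,
    relative to today (today = 1.0)."""
    return ((1 + DATA_GROWTH) / (1 + CPU_GROWTH)) ** years


for years in (1, 5, 10):
    print(f"after {years:2d} years: {gap_after(years):.2f}x more data per unit of CPU compute")
```

Under these assumptions the gap roughly doubles in five years, which is why the workarounds stop being enough.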

We now deal in exabytes and are headed to zettabytes. The once-massive terabyte is consumer grade, available at Best Buy for mere dollars. Enterprise-grade pricing for terabyte storage is in the single digits.

At these prices we store all of the data we manufacture and in doing so we create working sets that swamp the capabilities of CPU-grade compute.

So what does this have to do with GPUs?

Well, GPUs are constructed differently than CPUs. They are not nearly as versatile, but unlike CPUs they boast thousands of cores, which, as we will see shortly, is particularly important when it comes to dealing with large datasets. Since GPUs are single-mindedly designed around maximizing parallelism, the transistors that Moore’s Law grants chipmakers with every process shrink are translated directly into more cores. As a result, GPUs are increasing their processing power by at least 40% per year, allowing them to keep pace with the growing deluge of data.

Back to the beginning

Since we are assuming that many of you are unfamiliar with GPUs, it may make sense to tell you how they got their start.

The reason GPUs exist at all is that engineers recognized in the nineties that rendering polygons to a screen was a fundamentally parallelizable problem - the color of each pixel could be computed independently of its neighbors. GPUs evolved as a more efficient alternative to CPUs for rendering everything from zombies to racecars to a user’s screen.

CPUs struggled with this kind of work, so GPUs were brought in to offload and specialize in those tasks.

GPUs solved the problem that CPUs couldn’t by making a chip with thousands of less sophisticated cores. With the right programming, these cores could perform the complex and massive number of mathematical calculations required to drive realistic gaming experiences.

GPUs didn’t just add cores. Architects realized that the increased processing power of GPUs needed to be matched by faster memory, leading them to drive development of much higher-bandwidth variants of RAM. GPUs now boast an order-of-magnitude greater memory bandwidth than CPUs, ensuring that the cards scream both at reading data and processing it.

With this use case, GPUs began to gain a foothold.

What happened next wasn’t in the plan - but will define the future of every industry and every aspect of our lives.

Enter the mad computer scientist

Turns out there is a pretty big crossover between gamers and computer scientists. Computer scientists realized that the ability to execute complex mathematical calculations across all of these cores had applications in high performance computing. Still, it was difficult, really difficult, to write code that ran efficiently on GPUs. Researchers interested in harnessing the power of graphics cards had to hack their computations into the graphics API, literally making the card think that the calculations they wanted to perform were actually rendering calculations to determine the color of pixels.

That was until 2007, when Nvidia introduced something called CUDA (Compute Unified Device Architecture). CUDA supports parallel programming in C, meaning enterprising computer scientists (who grew up on C) could begin to write basic CUDA almost immediately, enabling a new class of tasks to be easily parallelized.

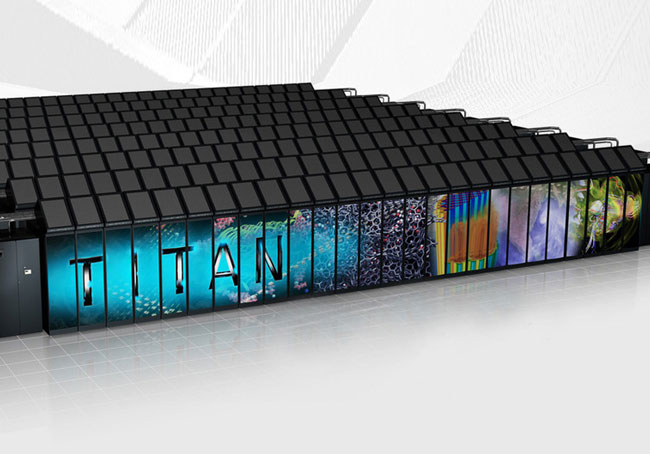

As a result, seemingly overnight, it felt as if every supercomputer on the planet was running on GPUs, lighting the match on the entire deep learning/autonomous vehicle/AI explosion.

Parallel Programming

You were probably with me up to the point about parallel programming.

Parallelization is actually key to understanding the power of GPUs.

Parallelization means that you can execute many calculations simultaneously rather than sequentially. Large problems can often be divided into smaller ones, which can then be solved at the same time. Given the types of problems in high performance computing (weather/climate models, the birth of the universe, DNA sequencing), parallelism is a critical feature for the supercomputing crowd, but its allure and some major advances have made it the approach of choice in many other domains of computing.
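The divide-then-combine idea can be sketched in a few lines of Python. This is an illustration only, not GPU code: the helper names (`split`, `sum_of_squares`) are made up for this example, and in standard CPython threads will not make this arithmetic faster. What it shows is the shape of the work: independent chunks, solved separately, then merged.

```python
from concurrent.futures import ThreadPoolExecutor


def sum_of_squares(chunk: range) -> int:
    """The 'small problem': needs no information from any other chunk."""
    return sum(x * x for x in chunk)


def split(n: int, parts: int) -> list[range]:
    """Divide the numbers 0..n-1 into at most `parts` contiguous chunks."""
    step = -(-n // parts)  # ceiling division
    return [range(start, min(start + step, n)) for start in range(0, n, step)]


N = 1_000_000
chunks = split(N, parts=8)

# Hand each chunk to a worker; the workers never need to talk to each other.
with ThreadPoolExecutor(max_workers=8) as pool:
    partials = list(pool.map(sum_of_squares, chunks))

parallel_total = sum(partials)               # combine the partial answers
sequential_total = sum_of_squares(range(N))  # same answer, one step at a time

print(parallel_total == sequential_total)
```

A GPU applies the same pattern with thousands of workers instead of eight, which is why problems that split cleanly like this one benefit so much.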

It is not just astrophysicists or climatologists that can reap the benefits of parallelism - it turns out many enterprise tasks benefit disproportionately from parallelism. For example:

- Database queries

- Brute-force searches in cryptography

- Computer simulations comparing many independent scenarios

- Machine learning/Deep learning

- Geo-visualization

Think about the big challenges in your organizations - the ones you don’t even consider due to their size or complexity but that could benefit from deeper analysis. I suspect that those tasks are parallelizable - and just because they are out of reach of CPU-grade compute does not mean they are out of reach for GPU-grade compute.

CPU vs. GPU Summary

So let’s summarize for a moment. GPUs are different from CPUs in the following ways:

- A GPU has thousands of cores per chip. A CPU has up to 22.

- A GPU is tailored for highly parallel operations while a CPU is optimized for serialized computations.

- GPUs have significantly higher bandwidth memory interfaces than CPUs.

- A CPU is great for things like word processing, running transactional databases and powering web applications. GPUs are great for things like sequencing DNA, determining the origins of the universe or predicting what your customer wants to order next (as well as how much, what price and when).

The ROI + Productivity Equations

So the case has been made for why GPUs represent the future of computing, but what do the economics look like? After all, this post is designed for a business audience.

The economics are very much in favor of GPUs as well. The reasons are manifold so let’s get started.

First off, a full GPU server will be more expensive than a fully loaded CPU server. While it is not an apples-to-apples comparison, a full 8-GPU setup can run up to $80K whereas a moderately loaded CPU setup will run around $9K. Prices of course can vary depending on how much RAM and SSD are specified.

While it may seem like a massive difference in capex it is important to note the following - it would take 250 of those servers to match the compute power of that single GPU server. When you take that into consideration, the GPU server is about $2.1 million less expensive. But you have to network all those CPU machines at a cost of $1K per, so that is another $125K.

Managing 250 machines is no easy task either. Call that 20% of the total - another $200K per year.

Something like Nvidia’s DGX-1 will fit into a 4RU space. Finding room for your 250 CPU servers is a little harder and a bit more expensive. Let’s call that another $30K per year.

Finally, there is heating and cooling. This is material and represents another incremental $250K per year.

All told, supporting the “cheaper” CPU option is likely to cost another $2.5M in the first year, not to mention you have to tell your kids you did damage to the planet in the process.
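For readers who want to check the tally, here is a minimal sketch that simply adds up the figures quoted in this section. Every number is taken from the text as stated, not independently sourced, and the variable names are illustrative.

```python
# All figures are the ones quoted in this section, in US dollars.
CPU_SERVER_COST = 9_000      # "moderately loaded CPU setup"
GPU_SERVER_COST = 80_000     # "full 8-GPU setup"
CPU_SERVERS_NEEDED = 250     # CPU servers said to match one GPU server

# Up-front hardware difference.
hardware_gap = CPU_SERVERS_NEEDED * CPU_SERVER_COST - GPU_SERVER_COST

# Additional first-year costs of the CPU fleet, as quoted.
first_year_extras = {
    "networking": 125_000,
    "management": 200_000,
    "rack space": 30_000,
    "heating and cooling": 250_000,
}

first_year_total = hardware_gap + sum(first_year_extras.values())

print(f"hardware gap:     ${hardware_gap:,}")
print(f"first-year total: ${first_year_total:,}")
```

Taken at face value, the line items land in the same range as the rounded $2.5M quoted above. Swap in your own quotes for each entry to see how the comparison holds for your environment.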

Ultimately this decision will be predicated on your workloads and what you want to support, but if the workload is amenable to parallelization, the density and performance of the compute make the decision to go with GPU relatively straightforward from a ROI perspective.

The productivity play…

We have written extensively on the implications of latency in analytics. The basic argument is that when you have to wait seconds or minutes for a query to run or a screen to refresh you start to behave differently. You look for shortcuts, you run the query on smaller datasets, you only ask the questions you know will return quickly, you don’t explore - taking a few queries as directional.

GPUs deliver exceptional performance. Minutes shrink to milliseconds. With millisecond latency, everything changes. Your most valuable intellectual resources (your analytical ones) get their answers hundreds of times faster than they would otherwise, so they dig deeper, they engage in speed-of-thought exploration, and ultimately they are more productive.

It is hard to measure productivity improvements, but think about how much more productive you are in a broadband world vs. a dial-up world. That is the type of productivity gap we are talking about.

But wait there is more…

While it is simple to order a GPU server these days, the truth of the matter is that getting one approved can be a bureaucratic and political undertaking. Fear not: over the past few quarters every major cloud service provider has rolled out powerful GPU instances.

If buying your own hardware doesn’t appeal to your enterprise, you can procure the equivalent of a supercomputer for less than $15 an hour.
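As a rough sketch of the rent-versus-buy question, the snippet below compares the $15 hourly figure with the $80K server price quoted earlier. It assumes, for illustration only, that the cloud instance and the 8-GPU server are comparable, and it ignores power, space, and staffing on the purchase side.

```python
# Figures quoted in this post; comparability of the two options is an assumption.
GPU_SERVER_COST = 80_000    # purchase price of a full 8-GPU server, USD
CLOUD_RATE_PER_HOUR = 15    # upper bound quoted for a cloud GPU instance, USD

break_even_hours = GPU_SERVER_COST / CLOUD_RATE_PER_HOUR
break_even_days = break_even_hours / 24  # if the instance ran around the clock

print(f"break-even after about {break_even_hours:,.0f} hours of rented time")
print(f"which is about {break_even_days:.0f} days of continuous use")
```

If your workload runs only a few hours a day, renting stays cheaper for a long time; if it runs around the clock, owning starts to pay off within the first year under these assumptions.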

Not bad.

Seriously though…

This is generally the point where the business user expresses some skepticism along the lines of “where is the catch?”

Truth be told, there are catches, lots of them.

Not everything is parallelizable, and as a result you don’t always see the massive speedups you get on databases, analytics, deep learning or other types of problems that lend themselves nicely to parallelization.

As a result, the economics don’t look as favorable either.
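This caveat has a standard quantitative form known as Amdahl’s law. The post does not name it; it is brought in here only as an illustration. The sketch assumes a job where some fraction of the work can be spread across cores and the rest must run one step at a time.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Amdahl's law: best-case speedup when only `parallel_fraction` of the
    work can be spread across `cores`; the remainder stays sequential."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


# Hypothetical workloads, chosen only to show the contrast.
for fraction in (0.50, 0.90, 0.99):
    speedup = amdahl_speedup(fraction, cores=1000)
    print(f"{fraction:.0%} parallel on 1000 cores: at most {speedup:.1f}x faster")
```

A job that is only half parallel cannot even double in speed, no matter how many cores you throw at it, while one that is 99% parallel gets close to a hundredfold gain. That difference is what separates the workloads where GPUs shine from the ones where they do not.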

In the world of data, however, many of the problems that we blunt our proverbial compute pick on are embarrassingly parallel and thus the argument of faster, cheaper, better looking holds.

Closing thoughts….

This post was designed to explain why, as a business user, you should care about GPUs. It really resolves itself to the following:

- GPUs are the optimal compute platform for data analytics.

- We currently live in a world dominated by data.

- Because of this, the economics grossly favor GPUs.

- This doesn’t even count productivity gains.

- It’s really easy to get your hands on GPUs these days.

So take a moment to think about that one report that the BI team tells you takes three to five hours to run - if it completes at all. Or think about the one dashboard that you like but that turns into a bunch of spinning pinwheels if you click on anything on the screen.

Finally, think of that awesome idea you had last quarter that started with “wouldn’t it be great if we could….” and ended with your analytics lead saying “yes, yes it would be great, but our infrastructure can’t handle it.”

Those are the problems we want to talk to you about.